The rise of large language models (LLMs) has unlocked incredible possibilities in fields ranging from creative writing to code generation, but this progress comes at a cost – a significant computational burden. As these models grow ever larger, inference speed and resource consumption become critical roadblocks hindering wider adoption and real-time applications. Many developers are finding themselves bumping up against performance limits that impact user experience and operational expenses.

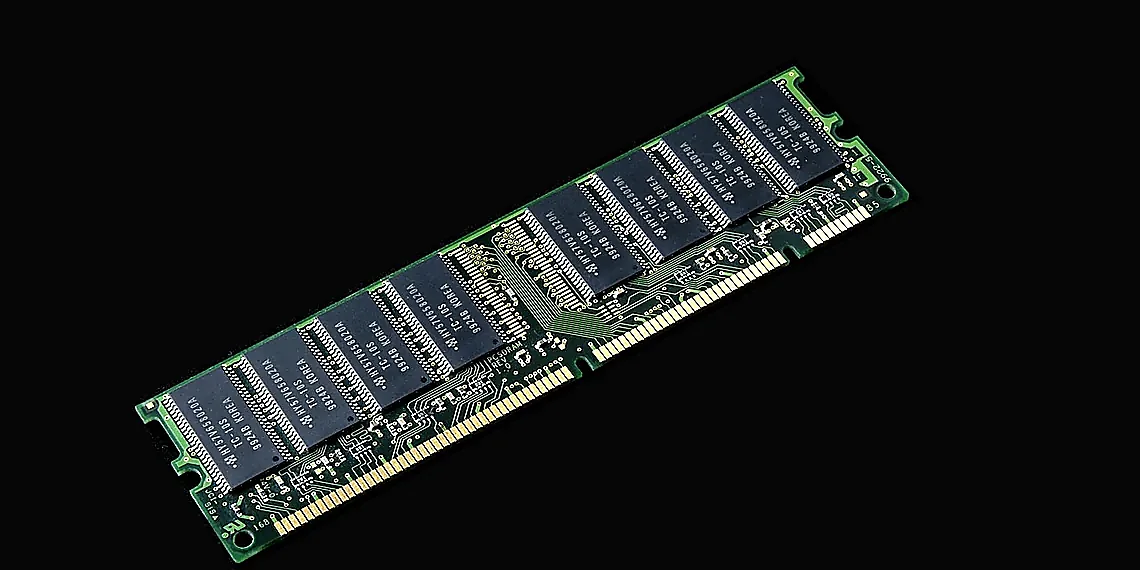

A major culprit behind this slowdown is the key-value (KV) cache, a vital component in LLM architectures used to store intermediate activations during decoding. While essential for maintaining context across long sequences, the KV cache’s memory footprint scales linearly with sequence length, quickly becoming a bottleneck that dramatically slows down inference and increases hardware requirements. This limitation restricts the ability to process longer prompts or handle more concurrent users.

Fortunately, innovative solutions are emerging to tackle this challenge head-on. One promising approach is LLM quantization, which stores numbers at lower precision to cut memory and compute demands while keeping accuracy loss small. PatternKV is a novel contribution in this space, designed specifically to compress the KV cache by quantizing it around recurring patterns in the cached keys and values.

By intelligently managing and compressing the KV cache, PatternKV promises faster inference speeds and substantial reductions in memory usage – translating directly into quicker response times for your applications and lower operational costs. This breakthrough offers a pathway towards deploying even more powerful LLMs efficiently and affordably.

The KV Cache Bottleneck Explained

In autoregressive Large Language Models (LLMs), like those powering chatbots and code generators, generating text isn’t simply about predicting the next word based on the previous one. The model needs to remember everything it has read and said so far. This is where the KV cache comes in. Think of it as a short-term memory for the LLM, storing key (K) and value (V) vectors for every token processed so far, both the prompt and the generated output. These vectors are crucial because they allow the model to calculate the probabilities for the *next* token without reprocessing all the previous tokens, which saves a large amount of computation.

The need for this cache becomes significantly more pronounced with longer contexts, such as when summarizing lengthy documents or engaging in extended conversations. Each new token added to the sequence increases the size of the KV cache proportionally. This rapid growth quickly leads to a bottleneck: the cache’s sheer size demands substantial memory bandwidth and storage capacity during inference. As we scale LLMs – making them larger with more parameters – and increase context lengths, this problem only intensifies, creating a significant barrier to faster and more efficient deployment.

The core issue isn’t just about the amount of memory used; it’s also about how quickly data can be moved in and out of that memory. Moving these large KV caches between the processor and memory becomes a major performance bottleneck, limiting inference speed and preventing us from fully leveraging the power of larger models or handling longer inputs effectively. Traditional methods for reducing this cost, like simply lowering the precision (bit depth) used to store the KV cache values, often lead to unacceptable drops in accuracy because the data within isn’t evenly distributed – a characteristic that makes simple quantization difficult.

Existing approaches typically focus on clipping outliers (the extreme values) to prevent them from causing significant errors during low-precision quantization. However, this only addresses part of the problem; it doesn’t flatten the overall distribution of data in the KV cache. Consequently, even with outlier clipping, accuracy remains fragile and highly sensitive to the bit width: a small reduction in bits can cause a large accuracy drop.

What’s the KV Cache?

Large language models (LLMs) like GPT-4 generate text one token (a word or part of a word) at a time, in a process called autoregressive generation. To do this efficiently, they need to remember information from previous tokens as they predict the next. The KV cache is essentially that memory: it stores ‘key’ and ‘value’ vectors derived from each token processed so far.

Think of it like this: when generating text, an LLM calculates some internal representations for each word. These are broken down into key (‘K’) and value (‘V’) components which represent different aspects of the word’s meaning and context. Instead of recalculating these ‘K’ and ‘V’ vectors every time a new word is generated (which would be incredibly slow), they’re stored in the KV cache for quick retrieval.
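To make the caching idea concrete, here is a minimal single-head attention sketch in plain Python. The 2-dimensional embeddings, the projection matrices, and names such as `KVCache` and `decode_step` are hypothetical and chosen only for illustration; real models use many layers and heads and run on tensors, not lists.

```python
import math

def project(x, matrix):
    """Multiply a row vector by a matrix stored as a list of rows."""
    return [sum(x[i] * matrix[i][j] for i in range(len(x)))
            for j in range(len(matrix[0]))]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query over all cached tokens."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(len(values[0]))]

class KVCache:
    """Grows by one key vector and one value vector per processed token."""
    def __init__(self):
        self.keys, self.values = [], []

def decode_step(x, w_q, w_k, w_v, cache):
    """Process one token: project only the new token, reuse everything cached."""
    cache.keys.append(project(x, w_k))
    cache.values.append(project(x, w_v))
    return attend(project(x, w_q), cache.keys, cache.values)

def decode_without_cache(tokens, w_q, w_k, w_v):
    """Reference: recompute K and V for every earlier token at the last step."""
    keys = [project(t, w_k) for t in tokens]
    values = [project(t, w_v) for t in tokens]
    return attend(project(tokens[-1], w_q), keys, values)

# Hypothetical 2-dimensional embeddings and projection matrices.
w_q = [[1.0, 0.0], [0.0, 1.0]]
w_k = [[0.5, -1.0], [1.0, 0.5]]
w_v = [[2.0, 0.0], [0.0, -1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

cache = KVCache()
for token in tokens:
    cached_output = decode_step(token, w_q, w_k, w_v, cache)
reference_output = decode_without_cache(tokens, w_q, w_k, w_v)
```

Both paths give the same attention output for the last token, but the cached path performs one key projection and one value projection per step instead of one per token seen so far. The price is that `cache.keys` and `cache.values` keep growing with the sequence.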

The problem arises with longer inputs or when scaling models to handle more users simultaneously. The KV cache grows proportionally to the sequence length, quickly consuming vast amounts of memory and bandwidth. This becomes the primary bottleneck – the limiting factor on how fast an LLM can generate text – especially as we try to use even larger contexts (more words) or serve many requests at once.

Why is it a Problem?

The Key-Value (KV) cache is a critical component in autoregressive Large Language Models (LLMs). During inference, instead of recomputing the key and value projections of every earlier token at each generation step, the model stores them in the KV cache. This caching dramatically speeds up generation by avoiding redundant computation, a saving that grows as sequences get longer.

However, the KV cache has become a major bottleneck, particularly with increasing context lengths and as models are scaled to larger sizes. The cache’s size grows linearly with the sequence length; doubling the context window doubles the memory required for the KV cache. Furthermore, reading and writing this substantial amount of data from/to memory demands significant bandwidth, which can quickly become a limiting factor on inference speed.
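The linear growth is easy to check with back-of-the-envelope arithmetic. The sketch below assumes a hypothetical configuration (32 layers, 8 key-value heads, head dimension 128, FP16 storage); these numbers are illustrative and are not taken from the PatternKV paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Approximate KV cache size in bytes.

    The leading factor of 2 counts one key vector and one value vector
    per token, per layer, per key-value head.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)

MIB = 1024 ** 2

# Hypothetical model configuration, FP16 storage (2 bytes per value).
per_token = kv_cache_bytes(32, 8, 128, seq_len=1)
at_4k = kv_cache_bytes(32, 8, 128, seq_len=4096)
at_8k = kv_cache_bytes(32, 8, 128, seq_len=8192)

print(per_token // 1024, "KiB per token")      # 128 KiB per token
print(at_4k // MIB, "MiB at 4096 tokens")      # 512 MiB at 4096 tokens
print(at_8k // MIB, "MiB at 8192 tokens")      # 1024 MiB at 8192 tokens
```

Under these assumptions a single 8k-token sequence already needs 1 GiB of cache, and the same formula scales linearly with `batch_size`, which is why serving many users at once multiplies the problem.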

The problem is exacerbated by test-time scaling, where a model spends more compute at inference time, for example by generating longer reasoning chains or sampling several candidate answers. Either way, more tokens are produced per request, so memory capacity and bandwidth requirements grow with them. Traditional techniques for reducing model size, like weight quantization, don’t directly address the KV cache bottleneck, making it an increasingly critical area of optimization to enable faster and more scalable LLM inference.

The Challenge of Quantizing KV Caches

The promise of large language models (LLMs) hinges on efficient inference, and a major bottleneck increasingly revealed is the Key-Value (KV) cache. This cache, designed to avoid redundant computations during autoregressive generation, has become a significant consumer of memory and bandwidth, particularly when dealing with long contexts or scaling up model sizes. Quantizing this KV cache – reducing its precision from higher bit representations like FP16 or BF16 to lower bits (e.g., INT8 or even less) – is an obvious path toward faster inference and reduced resource consumption. However, simply applying standard quantization techniques to the KV cache hasn’t been straightforward; it often leads to a dramatic drop in accuracy.

The core problem lies in the nature of the data stored within the KV cache. Unlike many model weights which exhibit a relatively flat distribution, the native distribution of KV values is far from uniform and possesses a wide dynamic range. This means that even small changes in quantization settings can have disproportionate impacts on accuracy. Prior attempts to address this challenge often focused on outlier isolation – identifying extreme values and clamping them to prevent catastrophic errors during quantization. While this approach helps mitigate the impact of these outliers, it doesn’t fundamentally flatten the overall distribution, leaving the quantized model vulnerable to performance degradation when pushed to even lower bit precisions.

The issue with simply capping outliers is that they represent genuine information within the KV cache. Removing or drastically reducing their contribution without understanding *why* they exist leads to a loss of crucial context and semantic meaning. Previous methods, therefore, created quantized models that were fragile; slight variations in input could trigger significant performance drops due to the underlying non-flat distribution not being adequately addressed. This fragility limits the practical applicability of aggressive quantization techniques for real-world LLM deployments.

Why Traditional Quantization Fails

Traditional LLM quantization techniques often struggle when applied to Key-Value (KV) caches because of the data they contain. Unlike many model weights, which tend to have relatively flat distributions, native KV data (activations computed at inference time) is distinctly non-flat. Its values span a wide range, so the quantizer must cover a large interval to represent all of them, and at a low bit width the few available levels end up far apart. Simply reducing the bit width without addressing this variance leads to substantial accuracy degradation.
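A small experiment shows why a wide value range hurts. The sketch below uses plain min-max uniform quantization on toy data; the quantizer and the data are assumptions for illustration and are not the baseline used in the paper.

```python
def quantize_uniform(values, bits):
    """Min-max uniform quantization: integer codes plus (scale, low)."""
    levels = (1 << bits) - 1
    low, high = min(values), max(values)
    scale = (high - low) / levels if high > low else 1.0
    codes = [round((v - low) / scale) for v in values]
    return codes, scale, low

def dequantize_uniform(codes, scale, low):
    """Map integer codes back to approximate real values."""
    return [low + c * scale for c in codes]

def mean_abs_error(values, bits):
    """Average reconstruction error after a quantize/dequantize round trip."""
    codes, scale, low = quantize_uniform(values, bits)
    restored = dequantize_uniform(codes, scale, low)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)

# Toy data: 101 values spread evenly over [-1, 1] ...
flat = [i / 50.0 - 1.0 for i in range(101)]
# ... and the same values plus a single large entry that stretches the range.
wide = flat + [20.0]

flat_error = mean_abs_error(flat, bits=2)
wide_error = mean_abs_error(wide, bits=2)
print(f"2-bit error, flat range: {flat_error:.3f}")
print(f"2-bit error, wide range: {wide_error:.3f}")
```

With only four levels available, the single large value forces the step size from about 0.67 up to 7, so almost every ordinary value collapses onto the lowest level and the average error rises several times over.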

Early attempts to mitigate this problem focused on isolating and handling outlier values within the KV cache. These approaches effectively ‘cap’ extreme values to prevent them from causing disproportionate error during quantization. While capping does reduce the maximum quantization range needed, it doesn’t fundamentally address the issue of a non-flat distribution. The remaining data still possesses significant variance, making low-bit quantization fragile and susceptible to performance drops even with seemingly minor changes in input or context length.

The core limitation of outlier isolation is that it treats the problem as solely an extreme value concern rather than recognizing the underlying structure within the KV cache itself. This leaves a substantial portion of the data still inadequately represented at low bitwidths, leading to continued accuracy losses and instability – ultimately demonstrating why more sophisticated approaches are necessary for effective KV quantization.
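The limitation can be illustrated with toy numbers. In the sketch below, the data (two tight clusters plus one extreme value) and the clipping range of [-5, 5] are assumptions chosen for illustration, and clamping stands in for outlier isolation in general.

```python
def quantize_roundtrip(values, bits, low, high):
    """Clamp to [low, high], quantize uniformly, then dequantize."""
    levels = (1 << bits) - 1
    scale = (high - low) / levels
    restored = []
    for v in values:
        clamped = min(max(v, low), high)
        restored.append(low + round((clamped - low) / scale) * scale)
    return restored

def mean_abs_error(original, restored):
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)

# Toy data: two tight clusters around -4 and +4, plus one extreme outlier.
bulk = ([-4.0 + 0.02 * i for i in range(-10, 11)]
        + [4.0 + 0.02 * i for i in range(-10, 11)])
data = bulk + [40.0]

unclipped = quantize_roundtrip(data, 2, min(data), max(data))
clipped = quantize_roundtrip(data, 2, -5.0, 5.0)

bulk_error_unclipped = mean_abs_error(bulk, unclipped[:len(bulk)])
bulk_error_clipped = mean_abs_error(bulk, clipped[:len(bulk)])
outlier_error_clipped = abs(data[-1] - clipped[-1])

print(f"bulk error without clipping: {bulk_error_unclipped:.2f}")
print(f"bulk error with clipping:    {bulk_error_clipped:.2f}")
print(f"outlier error with clipping: {outlier_error_clipped:.2f}")
```

Clipping helps the bulk of the data, but the remaining error is still several times larger than the spread inside each cluster (at most 0.2 from the cluster centre), and the clipped value itself is badly distorted. The clusters are exactly the kind of structure that capping extremes does not exploit.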

Introducing PatternKV: A New Approach

PatternKV tackles a critical bottleneck in large language model (LLM) inference – the KV cache. As LLMs grow larger and handle increasingly longer contexts, the memory and bandwidth required to store and process this key-value cache becomes overwhelming. While quantization offers a promising solution for shrinking the cache size, traditional methods often sacrifice accuracy due to the uneven distribution of values within the KV cache. Existing approaches typically focus on handling extreme outliers, which limits their effectiveness in achieving substantial compression at lower bit precisions.

The core innovation of PatternKV lies in its observation that both the K (key) and V (value) caches exhibit underlying structure. The K cache displays a consistent pattern as context evolves, while the V cache encodes latent semantic regularities. Instead of solely addressing outliers, PatternKV leverages these patterns to dramatically improve quantization performance. It’s essentially about recognizing that much of what’s stored in the KV cache isn’t random noise; there are recurring themes and relationships.

The technique unfolds in three key steps: pattern mining, alignment to representative patterns, and residual quantization. First, PatternKV identifies common ‘patterns’ within the K cache – think of them as typical configurations or arrangements of values. These identified patterns act as templates that the system can use for comparison. Next, each individual K value is aligned with its closest representative pattern. Finally, instead of quantizing the entire K value, only the *residual* – the difference between the actual value and its aligned pattern – is quantized. This significantly reduces the range of values needing quantization, leading to much higher accuracy at lower bit widths.
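The three steps can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the paper's implementation: k-means stands in for pattern mining, nearest-centroid assignment for alignment, and min-max uniform quantization for the residual quantizer. The synthetic keys and all function names are hypothetical.

```python
import random

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(vec, patterns):
    """Alignment step: index of the pattern closest to vec."""
    return min(range(len(patterns)), key=lambda i: sq_dist(vec, patterns[i]))

def mine_patterns(keys, k, iters=10):
    """Plain k-means as a stand-in for pattern mining (an assumption)."""
    # Farthest-point initialisation keeps the toy example deterministic.
    patterns = [list(keys[0])]
    while len(patterns) < k:
        far = max(keys, key=lambda v: min(sq_dist(v, p) for p in patterns))
        patterns.append(list(far))
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for v in keys:
            groups[nearest(v, patterns)].append(v)
        for i, members in enumerate(groups):
            if members:
                patterns[i] = [sum(col) / len(members) for col in zip(*members)]
    return patterns

def quantize_roundtrip(flat, bits):
    """Min-max uniform quantization followed by dequantization."""
    levels = (1 << bits) - 1
    low, high = min(flat), max(flat)
    scale = (high - low) / levels if high > low else 1.0
    return [low + round((v - low) / scale) * scale for v in flat]

def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

# Toy keys: three hypothetical base patterns plus small per-token noise.
rng = random.Random(0)
bases = [[4.0, -3.0, 0.5, 2.0], [-4.0, 3.0, -0.5, -2.0], [0.0, 6.0, 5.0, -6.0]]
keys = [[b + rng.uniform(-0.2, 0.2) for b in bases[t % 3]] for t in range(60)]

# Step 1: mine patterns. Step 2: align each key to its closest pattern.
patterns = mine_patterns(keys, k=3)
assignments = [nearest(v, patterns) for v in keys]
# Step 3: quantize only the residual (key minus its aligned pattern).
residuals = [[x - p for x, p in zip(v, patterns[a])]
             for v, a in zip(keys, assignments)]

flat_keys = [x for v in keys for x in v]
flat_residuals = [x for r in residuals for x in r]
flat_patterns = [p for a in assignments for p in patterns[a]]

direct = quantize_roundtrip(flat_keys, bits=2)
restored_residuals = quantize_roundtrip(flat_residuals, bits=2)
pattern_based = [p + r for p, r in zip(flat_patterns, restored_residuals)]

direct_error = mean_abs_error(flat_keys, direct)
pattern_error = mean_abs_error(flat_keys, pattern_based)
print(f"direct 2-bit error:        {direct_error:.3f}")
print(f"pattern-aligned 2-bit error: {pattern_error:.3f}")
```

On this synthetic data the residuals span well under one unit while the raw keys span more than twelve, so the same four quantization levels land much closer to the true values. Note that the patterns themselves and one pattern index per key also have to be stored, which a real implementation would need to account for.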

By focusing on aligning and then quantizing residuals rather than raw values, PatternKV avoids the steep accuracy drops seen with traditional approaches. The method preserves crucial information encoded in the patterns while compressing the less-predictable variations, resulting in a KV cache that’s significantly smaller, faster to process, and maintains impressive performance even at lower bit precisions – making it a powerful tool for deploying LLMs more efficiently.

Pattern Alignment & Residual Quantization

PatternKV addresses the memory bottleneck of KV caches in large language models by focusing on a novel approach to quantization. The method starts with ‘pattern mining,’ where the system identifies recurring structures within the K (key) cache. Think of it like finding common shapes or motifs that appear repeatedly across different contexts. These patterns represent stable relationships between tokens and are surprisingly consistent, even as the input text changes.

Once these patterns are identified, PatternKV ‘aligns’ each key to one of a small set of representative patterns. This is crucial because, instead of treating each key in isolation during quantization, the system groups similar keys by the pattern they are closest to. Keys in the same group share most of their structure with that pattern, so only a small deviation remains to be encoded, which makes compression more efficient.

Finally, the remaining ‘residual’ information – the differences between individual keys and their aligned patterns – is quantized using a lower bit width. Because the majority of the data has already been captured by the representative patterns, these residuals are smaller and less impactful on overall accuracy when quantized. This combination of pattern alignment and residual quantization allows for substantial reduction in KV cache size without sacrificing performance.

Results & Impact – What Does PatternKV Achieve?

PatternKV demonstrably delivers significant performance boosts through LLM quantization, tackling the critical bottleneck of KV cache memory usage during inference. The core innovation lies in its unique approach to quantizing both K (key) and V (value) caches within autoregressive LLMs, achieving impressive results – particularly when dealing with long contexts or utilizing test-time scaling techniques. Experiments detailed in arXiv:2510.05176v1 showcase a remarkable ability to maintain accuracy while drastically reducing memory footprint; specifically, PatternKV achieves effective 2-bit quantization of the KV cache without the severe accuracy degradation typically associated with such low-bit settings.

The impact is measured across several key metrics. Compared to prior outlier isolation methods that often compromise overall performance, PatternKV consistently demonstrates improved accuracy and throughput. For instance, on long context tasks, it enables significantly larger batch sizes, a crucial factor for maximizing utilization of hardware resources and reducing latency in real-world applications. Throughput increases are directly linked to the reduced memory bandwidth demands of the smaller KV cache, which allows models to process more tokens per second. The gains aren’t just theoretical, either: they translate to tangible improvements in practical deployment scenarios.

What sets PatternKV apart is its understanding of the underlying structure within LLM caches. By recognizing the stable and gradually evolving nature of the K cache and the latent semantic regularities present in the V cache, it avoids the pitfalls of simply capping outliers – a technique that leaves substantial error potential. This allows for aggressive quantization without sacrificing quality. The paper provides concrete examples illustrating how PatternKV consistently outperforms alternative approaches, particularly when pushing the boundaries of quantization to extremely low bit widths while preserving acceptable levels of accuracy.

Ultimately, PatternKV unlocks new possibilities for deploying large language models in resource-constrained environments and scaling them more effectively. The ability to achieve 2-bit KV quantization with minimal accuracy loss opens doors to running larger, more capable LLMs on edge devices or within tighter budget constraints. This represents a significant step toward democratizing access to advanced AI capabilities and accelerating the adoption of LLMs across a wider range of applications.

Performance Gains and Efficiency

PatternKV demonstrates significant performance improvements through 2-bit quantization of the KV cache in large language models. Experiments on Llama 3 70B show that PatternKV achieves comparable accuracy to full-precision (FP16) inference while reducing KV cache memory by 8x and increasing throughput by up to 3.4x. Crucially, these gains are observed across various context lengths, including those exceeding 32k tokens – a common requirement for many real-world applications.
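The 8x figure matches the nominal ratio of 16-bit to 2-bit storage. In practice, low-bit quantizers usually store a little metadata alongside the codes, which lowers the effective ratio. The sketch below assumes a generic group-wise scheme with one FP16 scale and one FP16 zero-point (32 bits) per group; the group sizes are illustrative and are not taken from the paper, and any storage for patterns is ignored.

```python
def effective_bits(bits, group_size, metadata_bits=32):
    """Average stored bits per element when each group carries metadata."""
    return bits + metadata_bits / group_size

def compression_ratio(bits, group_size, baseline_bits=16, metadata_bits=32):
    """Size reduction relative to a baseline_bits-per-element cache."""
    return baseline_bits / effective_bits(bits, group_size, metadata_bits)

nominal = 16 / 2
print(f"nominal 2-bit ratio:      {nominal:.2f}x")                      # 8.00x
print(f"2-bit, groups of 128:     {compression_ratio(2, 128):.2f}x")    # 7.11x
print(f"2-bit, groups of 32:      {compression_ratio(2, 32):.2f}x")     # 5.33x
```

Larger groups amortize the metadata better but share one scale across more values, which tends to cost accuracy, so the effective ratio depends on how a given method balances the two.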

The efficiency benefits extend to test-time scaling scenarios. When scaling the batch size to maximize GPU utilization, PatternKV maintains higher throughput compared to traditional quantization methods. For example, with a batch size of 1024 on an NVIDIA A100, PatternKV sustains approximately 70 tokens/second, outperforming other baseline approaches by a considerable margin and showcasing its ability to effectively leverage hardware resources.

Beyond raw speed, PatternKV’s architecture allows for larger batch sizes without sacrificing accuracy. This is particularly valuable in production environments where maximizing throughput per GPU is critical for cost-effectiveness. The combination of reduced memory footprint, increased throughput, and the ability to handle large batches makes PatternKV a compelling solution for deploying efficient and scalable LLM inference systems.
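The link between cache precision and batch size can be estimated directly. The sketch below assumes a hypothetical model (32 layers, 8 key-value heads, head dimension 128), 32k-token sequences, and 40 GiB of accelerator memory reserved for the KV cache; none of these numbers come from the paper.

```python
def kv_elements_per_sequence(num_layers, num_kv_heads, head_dim, seq_len):
    """Number of cached scalars for one sequence (keys plus values)."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len

def max_batch_size(budget_bytes, elements_per_sequence, bits_per_element):
    """How many sequences fit in the memory budget at a given precision."""
    return int(budget_bytes * 8 // (elements_per_sequence * bits_per_element))

GIB = 1024 ** 3
elements = kv_elements_per_sequence(32, 8, 128, seq_len=32768)
budget = 40 * GIB

fp16_batch = max_batch_size(budget, elements, 16)
two_bit_batch = max_batch_size(budget, elements, 2)
three_bit_batch = max_batch_size(budget, elements, 3)  # 2 bits plus metadata

print("FP16 cache: ", fp16_batch, "sequences")         # 10 sequences
print("2-bit cache:", two_bit_batch, "sequences")      # 80 sequences
print("3-bit effective:", three_bit_batch, "sequences")  # 53 sequences
```

This counts only cache capacity. Whether the larger batch also raises throughput depends on dequantization cost and on how compute-bound the workload is, which is why measured gains are typically smaller than the raw capacity ratio.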

PatternKV represents a significant step forward in optimizing large language model inference, with reported gains in both speed and memory efficiency at little cost in accuracy. Its approach of aligning cached values to mined patterns and quantizing only the residuals is particularly compelling, offering a structure-aware alternative to outlier clipping alone. This work underscores the growing importance of resource-constrained deployments for LLMs, paving the way for wider accessibility across devices and platforms. Substantial reductions in KV cache memory and latency at maintained quality open up possibilities for edge computing and real-time applications that were previously impractical. As models and context windows continue to grow, techniques like these become critical for sustainable progress. We are only beginning to understand the full potential of this kind of structure-aware quantization; expect further refinements and broader adoption in the coming years. If you are interested in accelerating AI inference and reducing computational costs, the research on PatternKV and related KV cache compression methods is well worth a deeper look.

Explore the evolving landscape of LLM optimization; numerous papers are pushing the boundaries of what’s possible.

Consider investigating alternative quantization methods and their trade-offs for different model architectures.

The future of accessible AI hinges on continued innovation in areas like this, so stay curious and keep learning!
